How can a robot understand what you want — and admit when it doesn’t know how? Our framework CLASP bridges the gap between data-hungry vision-language-action models and data-efficient imitation learning by combining Vision-Language Models with Task-Parameterized Kernelized Movement Primitives (TP-KMPs), requiring only 2–5 demonstrations per skill.

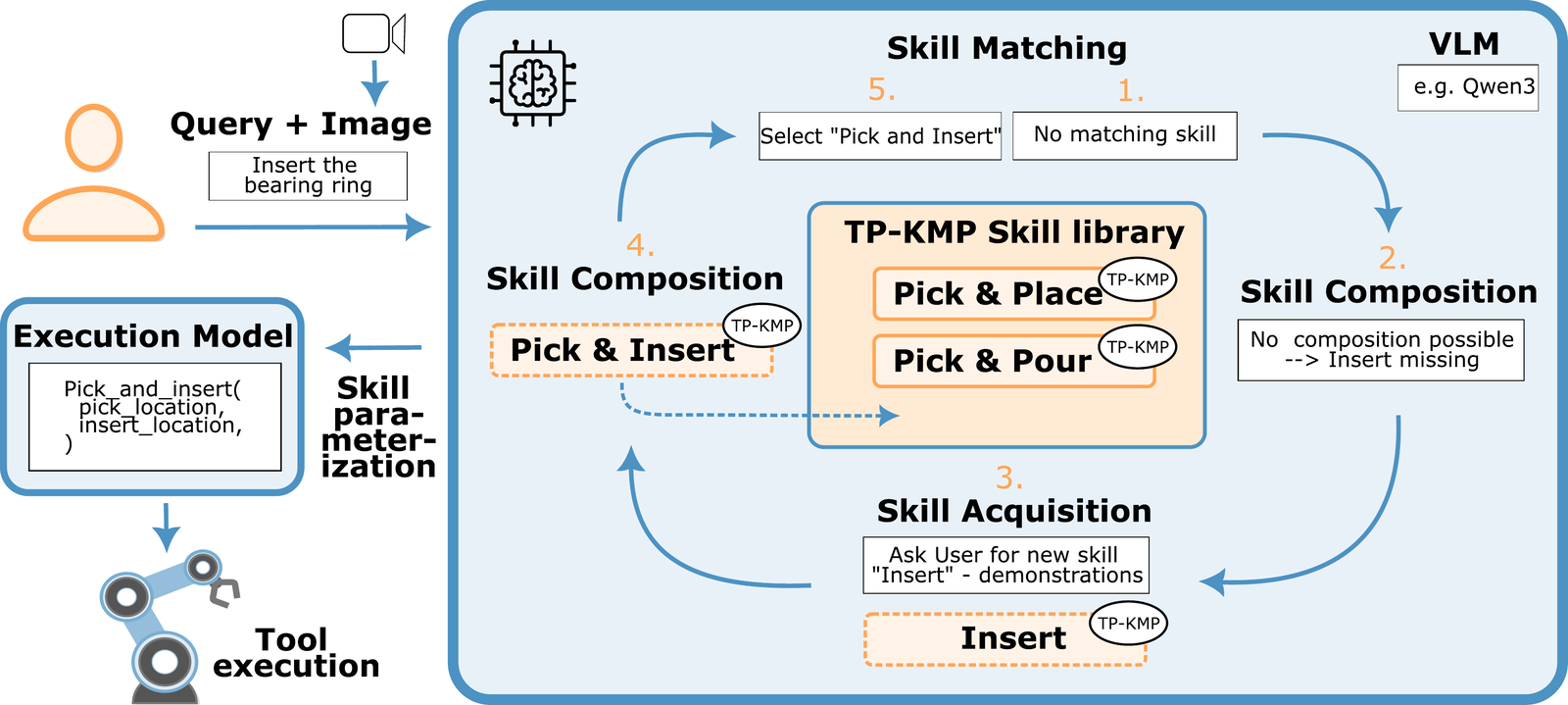

CLASP operates in three stages:

- Learning phase: Skills are acquired through kinesthetic teaching. The VLM analyzes the scene and automatically generates JSON schemas describing each skill’s parameters, preconditions, and semantics — no manual skill definition, no fine-tuning.

- Execution phase: Users issue natural language commands. The VLM matches them against the skill library and binds detected objects to task parameters. Novel behaviors are synthesized through covariance-weighted skill composition, where the TP-KMP covariance structures formally constrain which skill pairs can be meaningfully fused.

- Active skill acquisition: When neither an existing skill nor a valid composition satisfies a request, CLASP detects the capability gap and autonomously requests targeted demonstrations, so the library grows while each new skill still needs only a few demonstrations.
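
To make the idea of covariance-weighted composition concrete, here is a minimal sketch of one common way to fuse two probabilistic trajectory predictions: a precision-weighted product of Gaussians. The text above does not give CLASP's actual fusion rule, so this is an assumption made for illustration. The names `GaussianPoint` and `fuse` are hypothetical, covariances are simplified to per-dimension variances, and the criterion that decides which skill pairs may be fused is not modeled.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GaussianPoint:
    """Mean and per-dimension variance that one skill predicts at one time step.

    Hypothetical container: a real TP-KMP yields full covariance matrices;
    diagonal variances are a simplifying assumption for this sketch.
    """
    mean: List[float]
    var: List[float]


def fuse(a: GaussianPoint, b: GaussianPoint) -> GaussianPoint:
    """Fuse two predictions with a precision-weighted product of Gaussians.

    In each dimension, the skill with the smaller variance (higher confidence)
    dominates the fused mean. Assumed fusion rule, not taken from the source.
    """
    mean, var = [], []
    for mean_a, var_a, mean_b, var_b in zip(a.mean, a.var, b.mean, b.var):
        prec_a, prec_b = 1.0 / var_a, 1.0 / var_b  # precision = inverse variance
        fused_var = 1.0 / (prec_a + prec_b)
        mean.append(fused_var * (prec_a * mean_a + prec_b * mean_b))
        var.append(fused_var)
    return GaussianPoint(mean, var)


# Skill A is confident about x but loose about y; skill B is the opposite.
skill_a = GaussianPoint(mean=[0.2, 0.0], var=[0.01, 1.0])
skill_b = GaussianPoint(mean=[0.8, 0.5], var=[1.0, 0.01])
fused = fuse(skill_a, skill_b)
print(fused.mean, fused.var)
```

In this toy case the fused trajectory point follows skill A in x and skill B in y, and the fused variance in every dimension is smaller than either input variance. That is the sense in which the covariance structure indicates where each skill should be trusted.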

We validate CLASP on bearing ring insertion tasks using a 7-DoF torque-controlled collaborative robot, demonstrating reliable execution, generalization to novel object configurations, and skill composition.